PyTorch deep learning practice (3)

2022-04-23 22:02:00 【Know what you know and slowly understand what you don't know】

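Gradient descent method (the code averages the gradient over all samples, i.e. full-batch rather than stochastic gradient descent)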
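As a brief derivation of what the cost and gradient functions in the code compute, the formulas can be written out as follows. The symbols N (number of samples) and α (learning rate, 0.01 in the code) are notation introduced here for illustration and do not appear in the original.

$$
\mathrm{cost}(w) = \frac{1}{N}\sum_{n=1}^{N}\left(x_n w - y_n\right)^2,
\qquad
\frac{\partial\,\mathrm{cost}}{\partial w} = \frac{1}{N}\sum_{n=1}^{N} 2\,x_n\left(x_n w - y_n\right),
\qquad
w \leftarrow w - \alpha\,\frac{\partial\,\mathrm{cost}}{\partial w}
$$

For the data in the code and the starting value w = 1, the first step works out as:

$$
\mathrm{cost}(1) = \frac{1 + 4 + 9}{3} = \frac{14}{3} \approx 4.667,
\qquad
\frac{\partial\,\mathrm{cost}}{\partial w}\bigg|_{w=1} = \frac{-2 - 8 - 18}{3} = -\frac{28}{3} \approx -9.333,
\qquad
w \leftarrow 1 - 0.01\cdot\left(-\frac{28}{3}\right) \approx 1.0933
$$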

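import matplotlib.pyplot as plt

# Training data generated by y = 2 * x
x_data = [1.0, 2.0, 3.0]
y_data = [2.0, 4.0, 6.0]

# Initial guess for the weight
w = 1.0


def forward(x):
    # Linear model without bias: y_pred = x * w
    return x * w


def cost(xs, ys):
    # Mean squared error over all samples
    total = 0
    for x, y in zip(xs, ys):
        y_pred = forward(x)
        total += (y_pred - y) ** 2
    return total / len(xs)


def gradient(xs, ys):
    # Derivative of the mean squared error with respect to w
    grad = 0
    for x, y in zip(xs, ys):
        grad += 2 * x * (x * w - y)
    return grad / len(xs)


print('predict (before training)', 4, forward(4))

# Record the cost of every epoch so that it can be plotted afterwards
epoch_list = []
cost_list = []

for epoch in range(100):
    cost_val = cost(x_data, y_data)
    grad_val = gradient(x_data, y_data)
    w -= 0.01 * grad_val  # learning rate 0.01
    print('epoch:', epoch, 'w=', w, 'loss=', cost_val)
    epoch_list.append(epoch)
    cost_list.append(cost_val)

print('predict (after training)', 4, forward(4))

# Plot the cost against the epoch number
plt.plot(epoch_list, cost_list)
plt.ylabel('cost')
plt.xlabel('epoch')
plt.show()

A note on the last diagram: plotting x_data against y_data under the 'cost' and 'epoch' axis labels does not show training progress. The cost has to be recorded at every epoch (epoch_list and cost_list) and plotted against the epoch number.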
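The code in this post averages the gradient over all three samples before each update. For comparison, here is a minimal sketch of the stochastic variant, which updates w after every single sample. The function name sgd_train, its parameters, and the per-epoch shuffling with a fixed seed are assumptions made for illustration and are not part of the original code.

```python
import random

x_data = [1.0, 2.0, 3.0]
y_data = [2.0, 4.0, 6.0]


def sgd_train(xs, ys, w=1.0, lr=0.01, epochs=100, seed=0):
    """Hypothetical helper: fit y = x * w with one update per sample."""
    rng = random.Random(seed)          # fixed seed so runs are repeatable
    indices = list(range(len(xs)))
    for _ in range(epochs):
        rng.shuffle(indices)           # visit the samples in random order
        for i in indices:
            x, y = xs[i], ys[i]
            grad = 2 * x * (x * w - y)  # gradient of (x*w - y)**2 for one sample
            w -= lr * grad              # update immediately, not once per epoch
    return w


w_sgd = sgd_train(x_data, y_data)
print('w after SGD:', w_sgd)
print('predict (after training)', 4, 4 * w_sgd)
```

Because the data here are noise-free, both variants converge to w close to 2. On noisy data the per-sample updates would fluctuate more from step to step than the averaged gradient does.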

Copyright notice

This article was written by [Know what you know and slowly understand what you don't know]. Please include a link to the original when reposting. Thank you.

https://yzsam.com/2022/04/202204200609332775.html

边栏推荐

- C winfrom DataGridView click on the column header can not automatically sort the problem

- 开发consul 客户端即微服务

- How to use the project that created SVN for the first time

- MySQL 回表

- Subcontracting of wechat applet based on uni app

- Lightweight project management ideas

- Oracle updates the data of different table structures and fields to another table, and then inserts it into the new table

- Database Experiment four View experiment

- NVM introduction, NVM download, installation and use (node version management)

- Echerts add pie chart random color

猜你喜欢

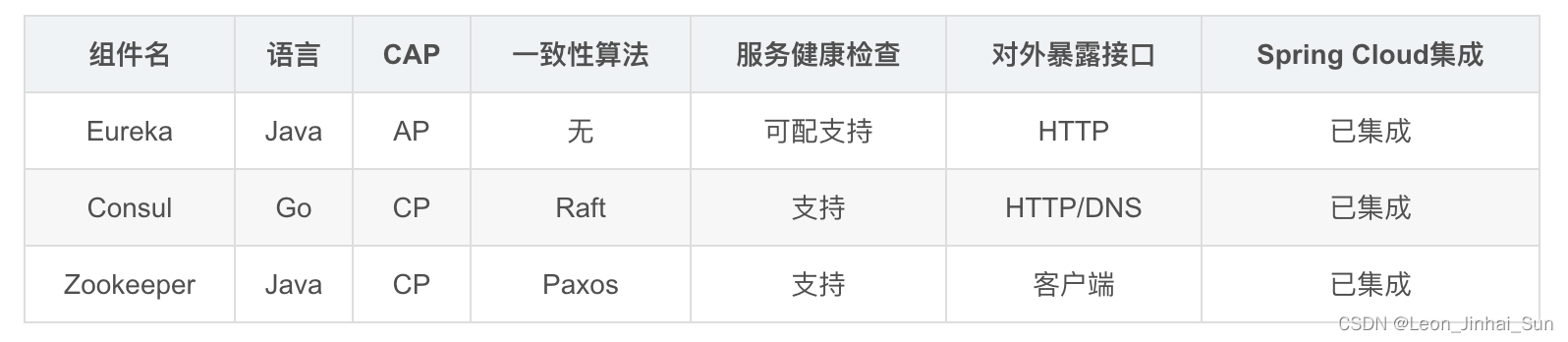

不同注册中心区别

Ribbon 服务调用

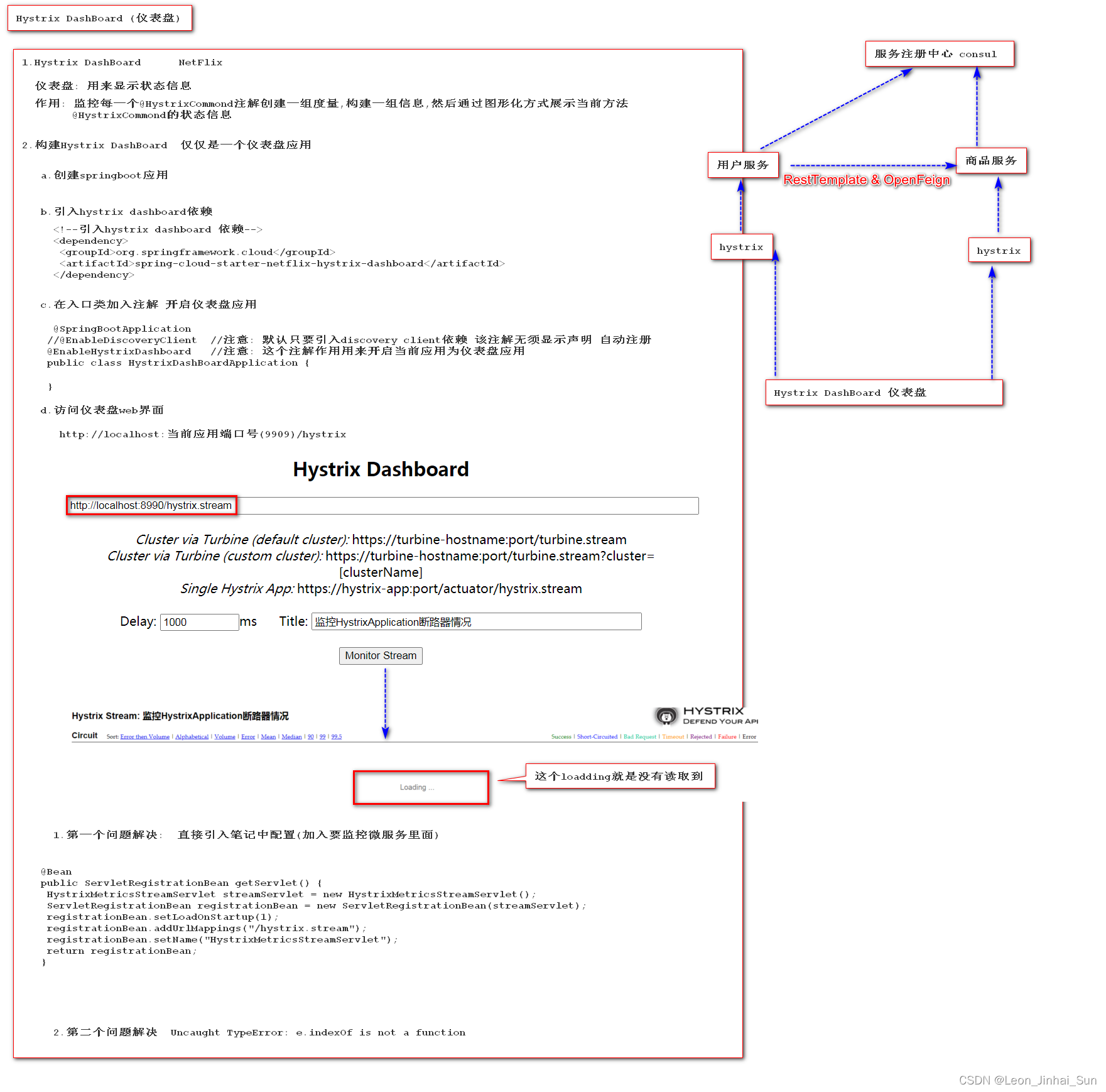

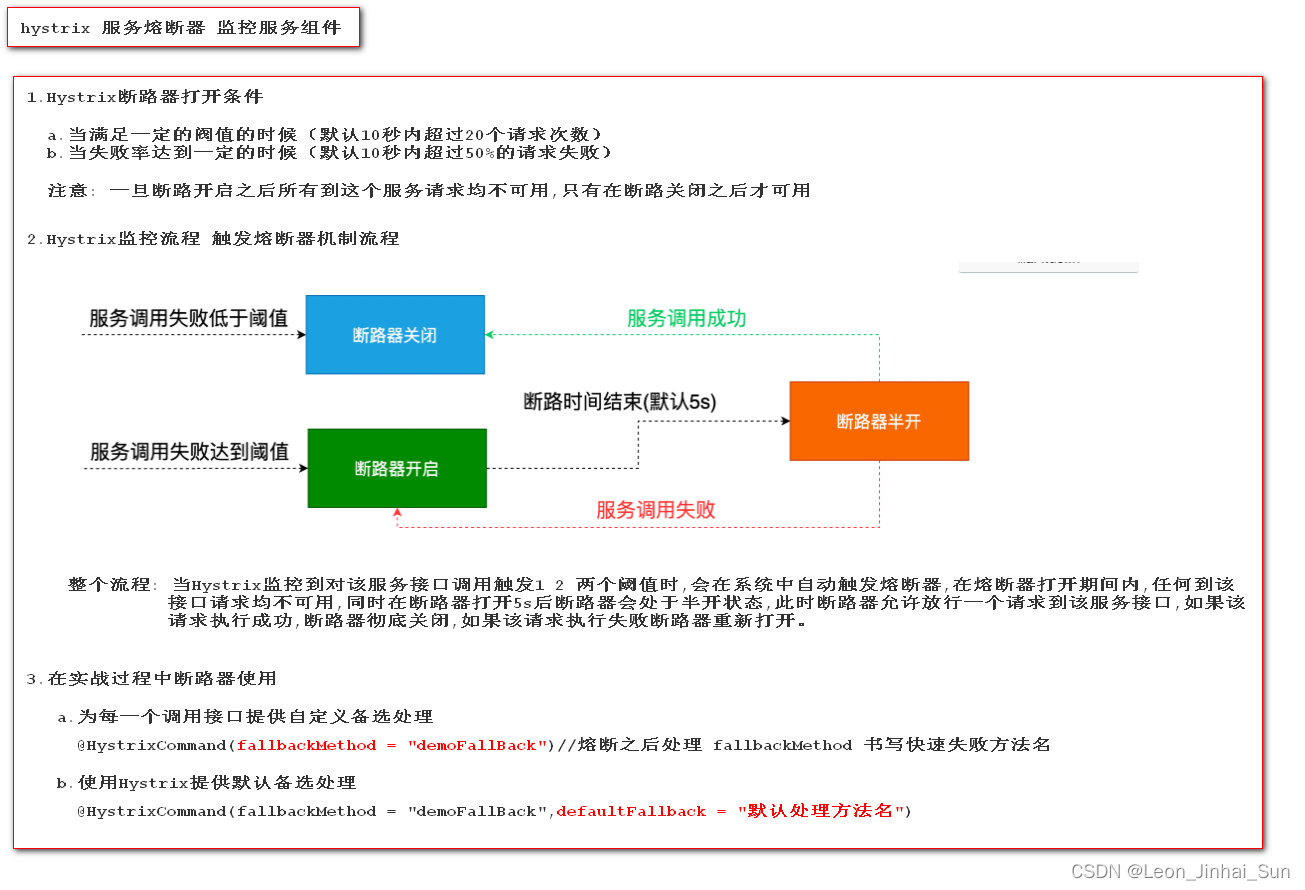

hystrix dashboard的使用

What if Jenkins forgot his password

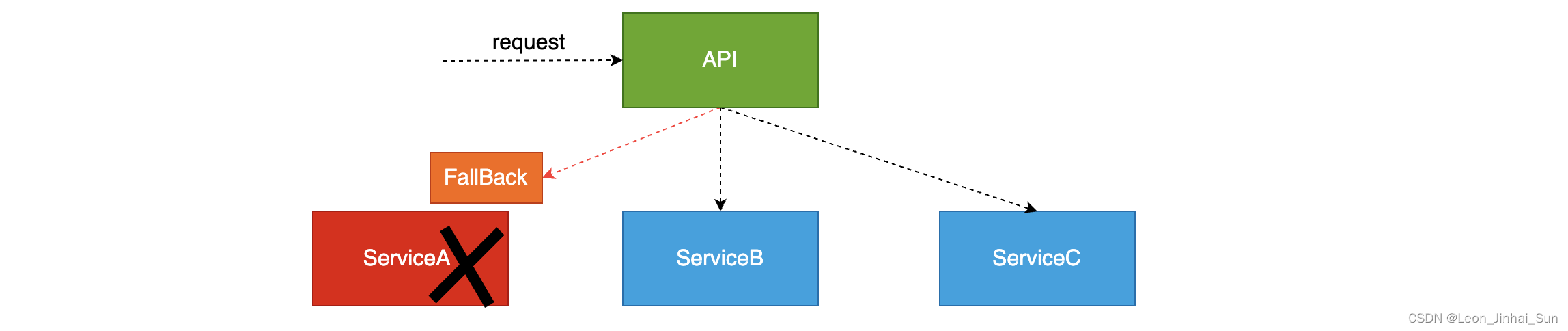

微服务系统中服务降级

Hystrix断路器开启条件和流程以及默认备选处理

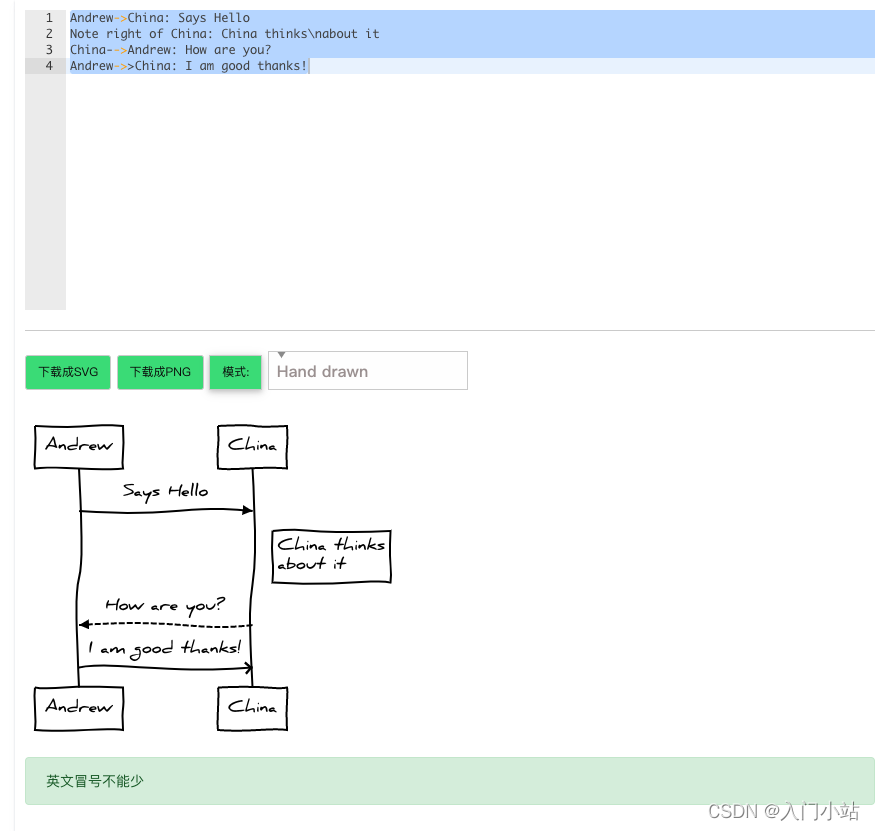

在线时序流程图制作工具

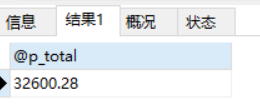

Database Experiment 7 stored procedure experiment

Plato farm is one of the four largest online IEOS in metauniverse, and the transaction on the chain is quite high

Plato Farm元宇宙IEO上线四大,链上交易颇高

随机推荐

Keras. Layers introduction to various layers

QT QML component library records owned by QML except basic components

资本追逐Near生态

不同注册中心区别

服务降级的实现

[leetcode sword finger offer 28. Symmetric binary tree (simple)]

Database Experiment 3 data update experiment

Oracle updates the data of different table structures and fields to another table, and then inserts it into the new table

JS to get the browser and screen height

服务间通信方式

JS prototype and prototype chain

Lightweight project management ideas

[leetcode sword finger offer 10 - II. Frog jumping steps (simple)]

Hystrix组件

April 24, 2022 Daily: current progress and open challenges of applying deep learning in the field of Bioscience

Handling of alternative solutions for openfeign integration with hystrix

A solution of C batch query

[leetcode refers to offer 42. Maximum sum of continuous subarrays (simple)]

Devops and cloud computing

Tencent cloud has two sides in an hour, which is almost as terrible as one side..