peptides.py

Physicochemical properties and indices for amino-acid sequences.

🗺️ Overview

peptides.py is a pure-Python package for computing common descriptors of protein sequences. It is a port of Peptides, the R package written by Daniel Osorio for the same purpose. The library has no external dependencies and is available for all modern Python versions (3.6+).

🔧 Installing

Install the peptides package directly from PyPI, which hosts universal wheels that can be installed with pip:

$ pip install peptides
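
Once installed, you can check which version pip picked up from Python itself. This is a small sketch built on the standard library's importlib.metadata module (available from Python 3.8, so it will not run on 3.6 or 3.7); installed_peptides_version is a hypothetical helper, not part of peptides:

```python
# Sketch: report the installed version of the "peptides" distribution.
# importlib.metadata needs Python 3.8+, even though peptides supports 3.6+.
from importlib.metadata import PackageNotFoundError, version
from typing import Optional


def installed_peptides_version() -> Optional[str]:
    """Return the installed version string, or None if peptides is missing."""
    try:
        return version("peptides")
    except PackageNotFoundError:
        return None


if __name__ == "__main__":
    v = installed_peptides_version()
    print(v if v is not None else "peptides is not installed")
```

From the command line, `pip show peptides` reports the same information.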

💡 Example

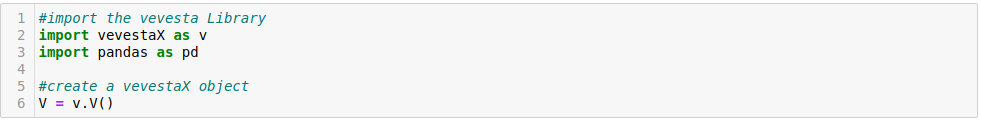

Start by creating a Peptide object from a protein sequence:

>>> import peptides
>>> peptide = peptides.Peptide("MLKKRFLGALAVATLLTLSFGTPVMAQSGSAVFTNEGVTPFAISYPGGGT")

Then use the appropriate methods to compute the descriptors you want:

>>> peptide.aliphatic_index()
89.8...

>>> peptide.boman()
-0.2097...

>>> peptide.charge(pH=7.4)
1.99199...

>>> peptide.isoelectric_point()
10.2436...
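
The aliphatic index is defined by Ikai (1980) as the relative volume occupied by aliphatic side chains (Ala, Val, Ile, Leu). As a minimal sketch of that formula, assuming the standard coefficients a = 2.9 and b = 3.9, the hypothetical helper below (not the peptides.py source) reproduces the 89.8 shown above for the example sequence:

```python
# Sketch of the aliphatic index (Ikai, 1980):
#   AI = X(Ala) + a * X(Val) + b * (X(Ile) + X(Leu)),  with a = 2.9, b = 3.9
# where X(...) is the mole percent (100 * count / length) of each residue.
# Illustration of the formula only, not the peptides.py implementation.


def aliphatic_index(sequence: str) -> float:
    seq = sequence.upper()
    n = len(seq)
    if n == 0:
        raise ValueError("sequence must not be empty")

    def mole_percent(residue: str) -> float:
        return 100.0 * seq.count(residue) / n

    a, b = 2.9, 3.9  # side-chain volumes of Val and Ile/Leu relative to Ala
    return (
        mole_percent("A")
        + a * mole_percent("V")
        + b * (mole_percent("I") + mole_percent("L"))
    )


seq = "MLKKRFLGALAVATLLTLSFGTPVMAQSGSAVFTNEGVTPFAISYPGGGT"
print(round(aliphatic_index(seq), 1))  # 89.8
```

For this sequence (6 Ala, 4 Val, 1 Ile, 6 Leu out of 50 residues) the terms are 12 + 2.9 × 8 + 3.9 × 14 = 89.8.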
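
Net charge and isoelectric point are linked: the charge at a given pH can be estimated with the Henderson-Hasselbalch equation over the ionizable termini and side chains, and the isoelectric point is the pH where that charge reaches zero. The sketch below illustrates this with hypothetical net_charge and isoelectric_point helpers and, as an assumption, the EMBOSS pKa values. Published pKa scales differ, and the one applied by peptides.py for charge() may not be this one, so net_charge(seq, 7.4) here comes out near 1.94 rather than the 1.99199... shown above; with this table the bisection does land close to the isoelectric point above.

```python
# Sketch: net charge via the Henderson-Hasselbalch equation, and the isoelectric
# point as the pH at which that charge is zero (found by bisection).
# ASSUMPTION: the pKa table is the EMBOSS scale; peptides.py may use other values.
from typing import Mapping

EMBOSS_PKA = {
    "Nterm": 8.6, "Cterm": 3.6,
    "K": 10.8, "R": 12.5, "H": 6.5,           # basic side chains
    "D": 3.9, "E": 4.1, "C": 8.5, "Y": 10.1,  # acidic side chains
}
BASIC = ("K", "R", "H")
ACIDIC = ("D", "E", "C", "Y")


def net_charge(sequence: str, ph: float, pka: Mapping[str, float] = EMBOSS_PKA) -> float:
    seq = sequence.upper()
    # Protonated (positive) fraction of the N-terminus and each basic residue.
    positive = 1.0 / (1.0 + 10.0 ** (ph - pka["Nterm"]))
    positive += sum(seq.count(aa) / (1.0 + 10.0 ** (ph - pka[aa])) for aa in BASIC)
    # Deprotonated (negative) fraction of the C-terminus and each acidic residue.
    negative = 1.0 / (1.0 + 10.0 ** (pka["Cterm"] - ph))
    negative += sum(seq.count(aa) / (1.0 + 10.0 ** (pka[aa] - ph)) for aa in ACIDIC)
    return positive - negative


def isoelectric_point(sequence: str, pka: Mapping[str, float] = EMBOSS_PKA,
                      tol: float = 1e-6) -> float:
    # Net charge falls monotonically as pH rises, so bisection finds the zero
    # crossing; if the charge never changes sign in [0, 14], the result is
    # clamped to that range.
    low, high = 0.0, 14.0
    while high - low > tol:
        mid = (low + high) / 2.0
        if net_charge(sequence, mid, pka) > 0.0:
            low = mid
        else:
            high = mid
    return (low + high) / 2.0


seq = "MLKKRFLGALAVATLLTLSFGTPVMAQSGSAVFTNEGVTPFAISYPGGGT"
print(round(net_charge(seq, 7.4), 2))    # 1.94 with these pKa values
print(round(isoelectric_point(seq), 2))  # 10.24
```

Bisection is used here only because the charge curve is monotonic; any one-dimensional root finder would do.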

Methods that return more than one scalar value (for instance, Peptide.blosum_indices or Peptide.ms_whim_scores) return a dedicated named tuple:

>>> peptide.ms_whim_scores()
MSWHIMScores(mswhim1=-0.436399..., mswhim2=0.4916..., mswhim3=-0.49200...)
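
Named tuples can be read by field name, unpacked like plain tuples, or converted to a dictionary. The class below is a hypothetical stand-in that only mirrors the field names printed above, to illustrate that behaviour with the standard library; it is not the definition used by peptides.py:

```python
# Hypothetical re-creation of a result type like MSWHIMScores, only to show
# how named tuples behave; the real class is defined inside peptides.py.
from typing import NamedTuple


class MSWHIMScores(NamedTuple):
    mswhim1: float
    mswhim2: float
    mswhim3: float


# Values copied (truncated) from the doctest output above.
scores = MSWHIMScores(mswhim1=-0.436399, mswhim2=0.4916, mswhim3=-0.49200)

print(scores.mswhim2)          # access a single field by name
first, second, third = scores  # or unpack it like a regular tuple
print(dict(scores._asdict()))  # convert to a dict keyed by field name
```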

Use the Peptide.descriptors method to get a dictionary containing every available descriptor. This makes it easy to build a pandas.DataFrame of descriptors for several protein sequences (pandas is not a dependency of peptides and must be installed separately):

>>> import pandas
>>> seqs = ["SDKEVDEVDAALSDLEITLE", "ARQQNLFINFCLILIFLLLI", "EGVNDNECEGFFSAR"]
>>> df = pandas.DataFrame([peptides.Peptide(s).descriptors() for s in seqs])
>>> df
    BLOSUM1   BLOSUM2  BLOSUM3   BLOSUM4  ...        Z2        Z3        Z4        Z5
0  0.367000 -0.436000   -0.239  0.014500  ... -0.711000 -0.104500 -1.486500  0.429500
1 -0.697500 -0.372500   -0.493  0.157000  ... -0.307500 -0.627500 -0.450500  0.362000
2  0.479333 -0.001333    0.138  0.228667  ... -0.299333  0.465333 -0.976667  0.023333

[3 rows x 66 columns]
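
Because peptides.py itself has no dependencies, pandas is optional. As a sketch of a standard-library alternative, the dictionaries returned by Peptide.descriptors can be written to CSV with csv.DictWriter. The rows below are stand-ins holding two columns copied from the table above, not real descriptors() output:

```python
# Sketch: export per-sequence descriptor dictionaries to CSV using only the
# standard library. `rows` is a hypothetical stand-in for
# [peptides.Peptide(s).descriptors() for s in seqs], cut down to two columns.
import csv
import io

rows = [
    {"BLOSUM1": 0.367000, "Z5": 0.429500},
    {"BLOSUM1": -0.697500, "Z5": 0.362000},
    {"BLOSUM1": 0.479333, "Z5": 0.023333},
]

# Replace with open("descriptors.csv", "w", newline="") to write a real file.
buffer = io.StringIO()
writer = csv.DictWriter(buffer, fieldnames=list(rows[0]))
writer.writeheader()   # column names taken from the first dictionary's keys
writer.writerows(rows)
print(buffer.getvalue())
```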

💭 Feedback

⚠️ Issue Tracker

Found a bug? Have an enhancement request? Head over to the GitHub issue tracker if you need to report or ask something. If you are filing a bug report, please include as much information as you can about the issue, and try to recreate the bug in a simple, easily reproducible situation.

🏗️ Contributing

Contributions are more than welcome! See CONTRIBUTING.md for more details.

⚖️ License

This library is provided under the GNU General Public License v3.0. The original R Peptides package was written by Daniel Osorio, Paola Rondón-Villarreal and Rodrigo Torres, and is licensed under the terms of the GPLv2.

This project is in no way affiliated with, sponsored by, or otherwise endorsed by the original Peptides authors. It was developed by Martin Larralde during his PhD project at the European Molecular Biology Laboratory in the Zeller team.