
Product Quantization (PQ)

2022-08-09 10:47:00 【qq_26391203】

How product quantization is used in image retrieval:

"After quantization learning, the asymmetric distance between a given query sample and the samples in the library can be computed by table lookup."

A brief description of product quantization: the typical representative of vector quantization methods is product quantization (PQ), which decomposes the feature space into a Cartesian product of multiple low-dimensional subspaces and then quantizes each subspace individually. In the training phase, each subspace is clustered to obtain k centroids (i.e. the quantizer for that subspace). The Cartesian product of these centroids forms a dense partition of the whole space, which keeps the quantization error relatively small. After quantization learning, the asymmetric distance between a given query sample and the samples in the library can be computed by table lookup.

Approximate Nearest Neighbor Search

- K-means clustering algorithm: Clustering is unsupervised learning. Supervised methods such as regression, Naive Bayes, and SVM all have a class label y, that is, the class of each sample is already given in the training data. Clustering samples have no y, only the features x. For example, assume the stars in the universe can be represented as a set of points in three-dimensional space. The purpose of clustering is to find the latent class y of each sample x and to group together the samples x that share the same class y. For the stars above, the result of clustering is a set of star clusters: points within a cluster are relatively close to each other, while stars in different clusters are relatively far apart.

- Product quantization process idea: https://www.cnblogs.com/mafuqiang/p/7161592.html
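
The training and table-lookup steps described above can be sketched roughly as follows. This is a minimal NumPy illustration, not the implementation from the linked article: the function names (`kmeans`, `pq_train`, `pq_encode`, `adc_distances`), the random data, and the parameter values (`m=4` subspaces, `k=16` centroids) are all assumptions chosen for the example, and it assumes the vector dimension is divisible by `m`.

```python
import numpy as np

def kmeans(x, k, n_iter=20, seed=0):
    """Plain Lloyd's k-means; returns a (k, d) array of centroids."""
    rng = np.random.default_rng(seed)
    # Initialise centroids from k distinct data points.
    centroids = x[rng.choice(len(x), size=k, replace=False)].copy()
    for _ in range(n_iter):
        # Squared distance from every point to every centroid: shape (n, k).
        d2 = ((x[:, None, :] - centroids[None, :, :]) ** 2).sum(axis=2)
        labels = d2.argmin(axis=1)
        for j in range(k):
            members = x[labels == j]
            if len(members) > 0:  # keep the old centroid if a cluster is empty
                centroids[j] = members.mean(axis=0)
    return centroids

def pq_train(x, m, k):
    """Split the d dimensions into m subspaces and cluster each one separately."""
    subs = np.split(x, m, axis=1)  # requires d % m == 0
    return [kmeans(s, k, seed=i) for i, s in enumerate(subs)]

def pq_encode(x, codebooks):
    """Replace each sub-vector by the index of its nearest centroid."""
    m = len(codebooks)
    subs = np.split(x, m, axis=1)
    codes = np.empty((len(x), m), dtype=np.int64)
    for i, (s, c) in enumerate(zip(subs, codebooks)):
        d2 = ((s[:, None, :] - c[None, :, :]) ** 2).sum(axis=2)
        codes[:, i] = d2.argmin(axis=1)
    return codes

def adc_distances(query, codes, codebooks):
    """Asymmetric distance: raw query against quantized database vectors."""
    m = len(codebooks)
    q_subs = np.split(query, m)
    # table[i, j] = squared distance between query sub-vector i and centroid j.
    table = np.stack([((c - q) ** 2).sum(axis=1)
                      for q, c in zip(q_subs, codebooks)])
    # For each database code, look up one table entry per subspace and sum.
    return table[np.arange(m), codes].sum(axis=1)

# Hypothetical data: 500 database vectors of dimension 8.
rng = np.random.default_rng(0)
database = rng.normal(size=(500, 8))
codebooks = pq_train(database, m=4, k=16)
codes = pq_encode(database, codebooks)

query = rng.normal(size=8)
approx = adc_distances(query, codes, codebooks)
nearest = int(approx.argmin())  # index of the approximate nearest neighbour
```

The distance is called asymmetric because only the database side is quantized: the query stays in its original form, and the per-subspace table is built once per query, so scoring each database vector costs only `m` lookups and additions.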

边栏推荐

- String类型的字符串对象转实体类和String类型的Array转List

- CSDN的markdown编辑器语法完整大全

- 人物 | 从程序员到架构师,我是如何快速成长的?

- 10000以内素数表(代码块)

- unix环境编程 第十五章 15.10 POSIX信号量

- 史上最小白之《Word2vec》详解

- Multi-merchant mall system function disassembly 26 lectures - platform-side distribution settings

- linux mysql操作的相关命令

- RPN principle in faster-rcnn

- Official explanation, detailed explanation and example of torch.cat() function

猜你喜欢

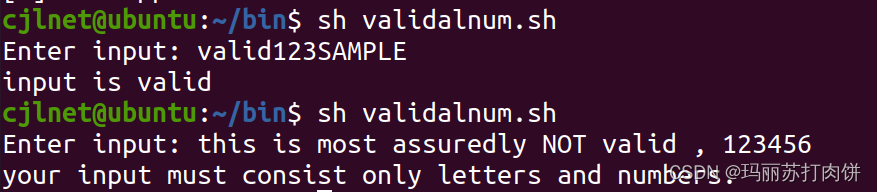

Shell script combat (2nd edition) / People's Posts and Telecommunications Press Script 2 Validate input: letters and numbers only

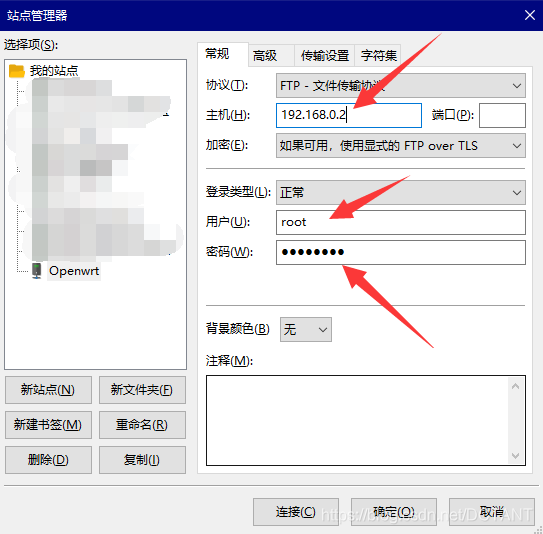

【原创】VMware Workstation实现Openwrt软路由功能,非ESXI,内容非常详细!

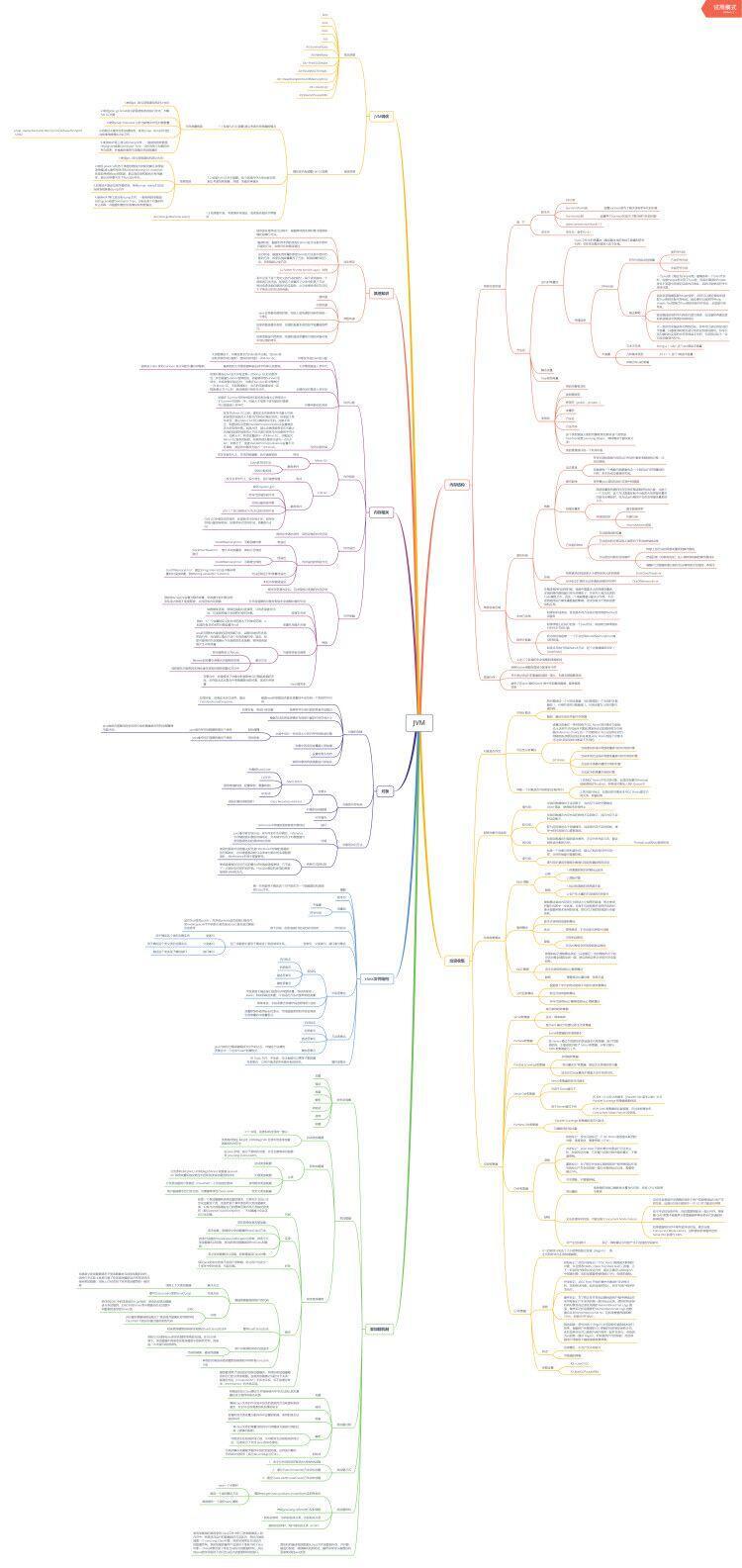

备战金三银四:如何成功拿到阿里offer(经历+面试题+如何准备)

OneNote 教程,如何在 OneNote 中搜索和查找笔记?

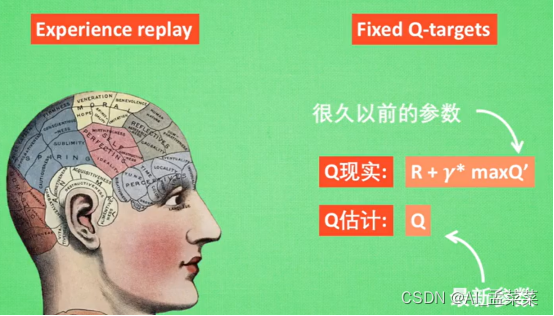

强化学习 (Reinforcement Learning)

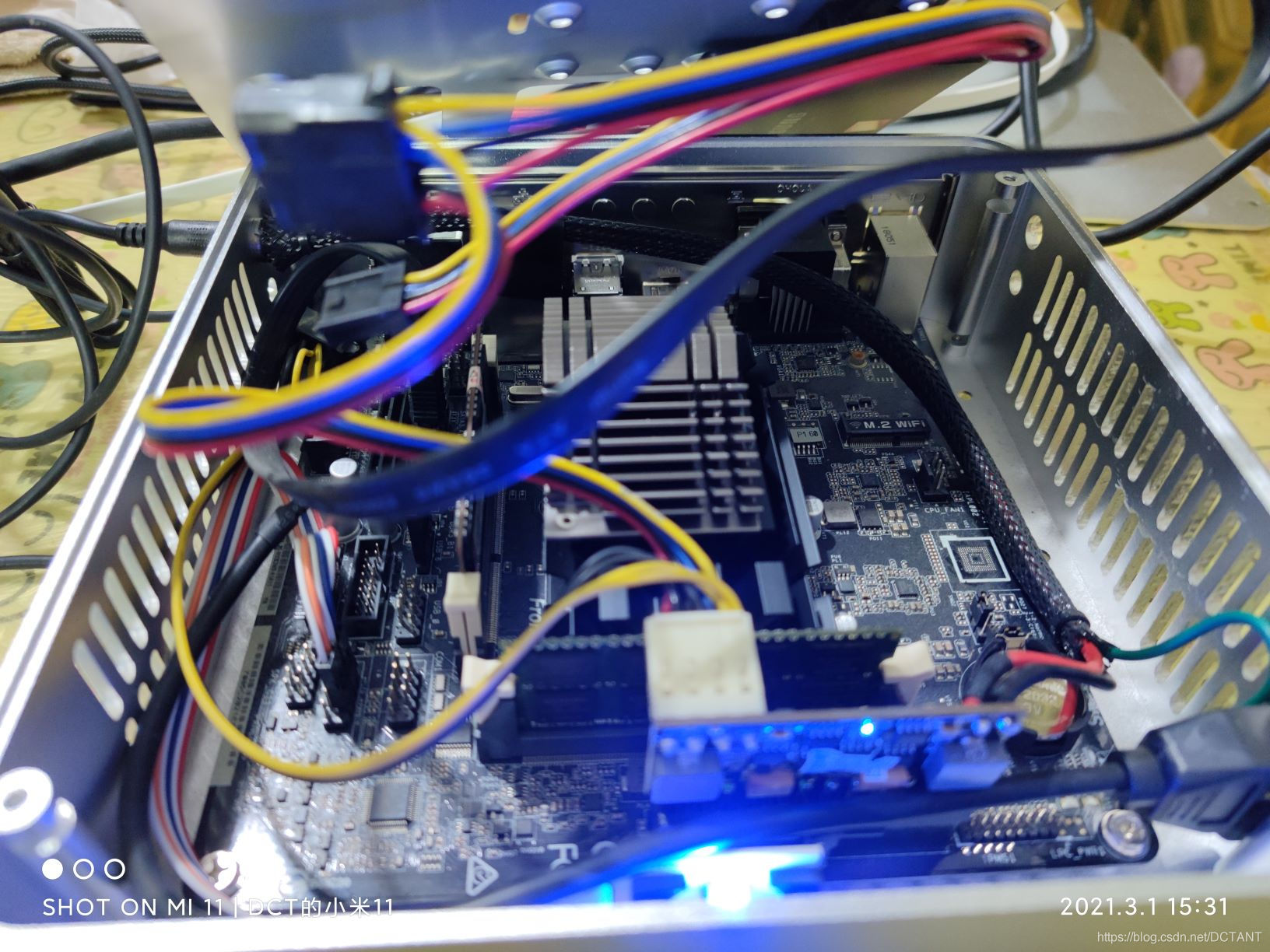

【报错记录】解决华擎J3455-ITX不插显示器无法开机的问题

人物 | 从程序员到架构师,我是如何快速成长的?

![[Error record] Solve the problem that ASRock J3455-ITX cannot be turned on without a monitor plugged in](/img/a9/d6aba07e6a4e1536cd10d91f274b2e.jpg)

[Error record] Solve the problem that ASRock J3455-ITX cannot be turned on without a monitor plugged in

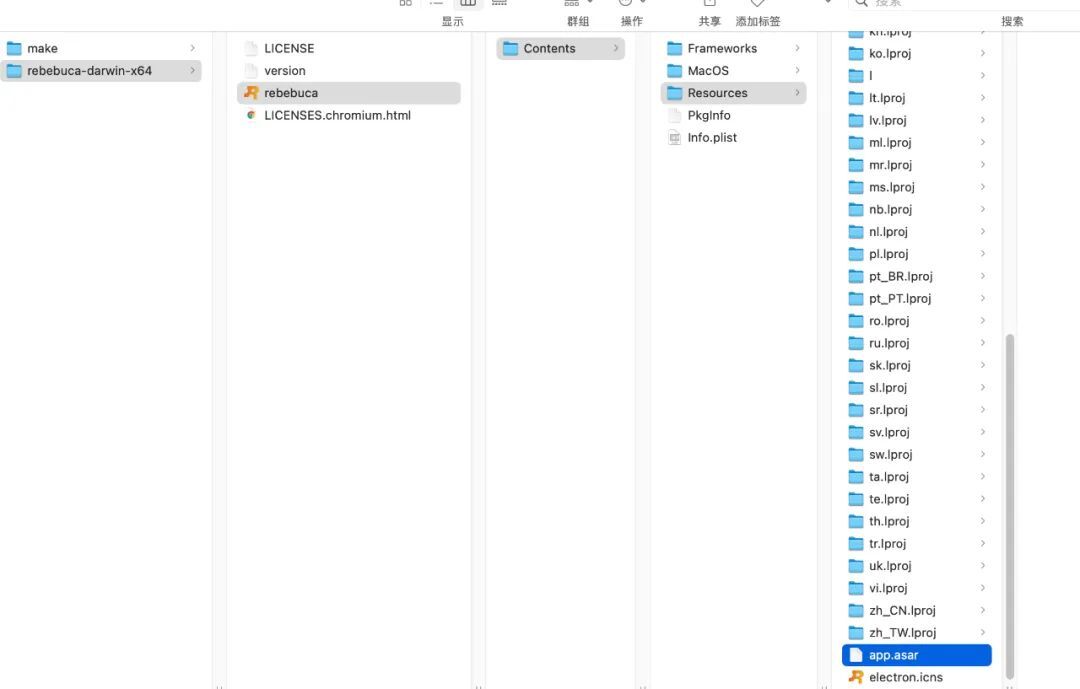

Electron application development best practices

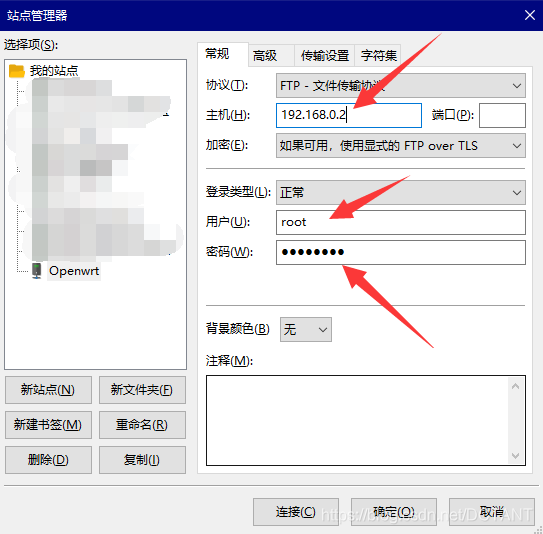

【 original 】 VMware Workstation implementation Openwrt soft routing, the ESXI, content is very detailed!

随机推荐

笔记本电脑使用常见问题,持续更新

faster-rcnn学习

985毕业,工作3年,分享从阿里辞职到了国企的一路辛酸和经验

unix环境编程 第十五章 15.5FIFO

The common problems in laptops, continuously updated

[Original] Usage of @PrePersist and @PreUpdate in JPA

1005 继续(3n+1)猜想 (25 分)

多商户商城系统功能拆解26讲-平台端分销设置

How tall is the B+ tree of the MySQL index?

可能95%的人还在犯的PyTorch错误

BERT预训练模型(Bidirectional Encoder Representations from Transformers)-原理详解

编解码(seq2seq)+注意机制(attention) 详细讲解

activemq message persistence

15.10 the POSIX semaphore Unix environment programming chapter 15

百度云大文件网页直接下载

Oracle数据库常用函数总结

torch.cat()函数的官方解释,详解以及例子

Received your first five-figure salary

商业技术解决方案与高阶技术专题 - 数据可视化专题

For versions corresponding to tensorflow and numpy, report FutureWarning: Passing (type, 1) or '1type' as a synonym of type is deprecate