
Deploying Robot Vision Models Using Raspberry Pi and OAK Camera

2022-08-11 09:33:00 【OAK China_Official】

Editor: OAK China

First published: oakchina.cn


▌Introduction

Hello everyone, this is OAK China, and I am your assistant. In an earlier WeChat Moments post I introduced an automatic ball-picking robot project. This article extends that project, and similar projects can use it as a reference.

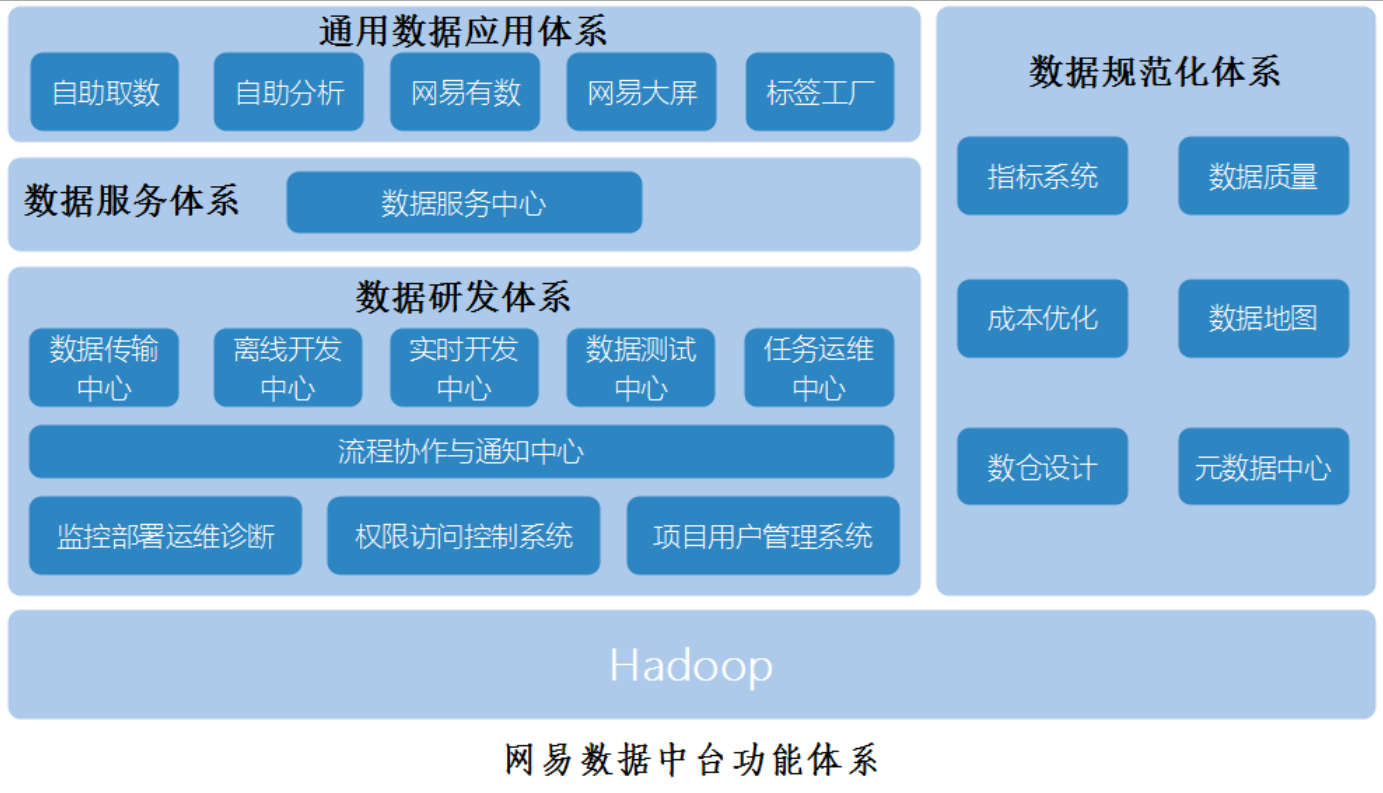

Computer vision enables robots to adapt intelligently to dynamic environments. With Roboflow and an OAK camera, you can develop and run powerful computer vision models on your robot.

In this guide we use the FIRST Robotics Competition (FRC) as an example, but the same setup can be applied to a variety of robotic platforms and use cases.

Hardware preparation

The hardware we need:

- Raspberry Pi 4

- An OAK camera (for example OAK-D-Lite, OAK-D-S2, or OAK-D Pro)

- Peripherals, such as a keyboard and mouse, to set up your Raspberry Pi.

Follow the official documentation to set up your Raspberry Pi with the latest version of the Raspbian operating system. Make sure the Raspberry Pi has a working internet connection so that we can install the dependencies later.

Build a robotics dataset

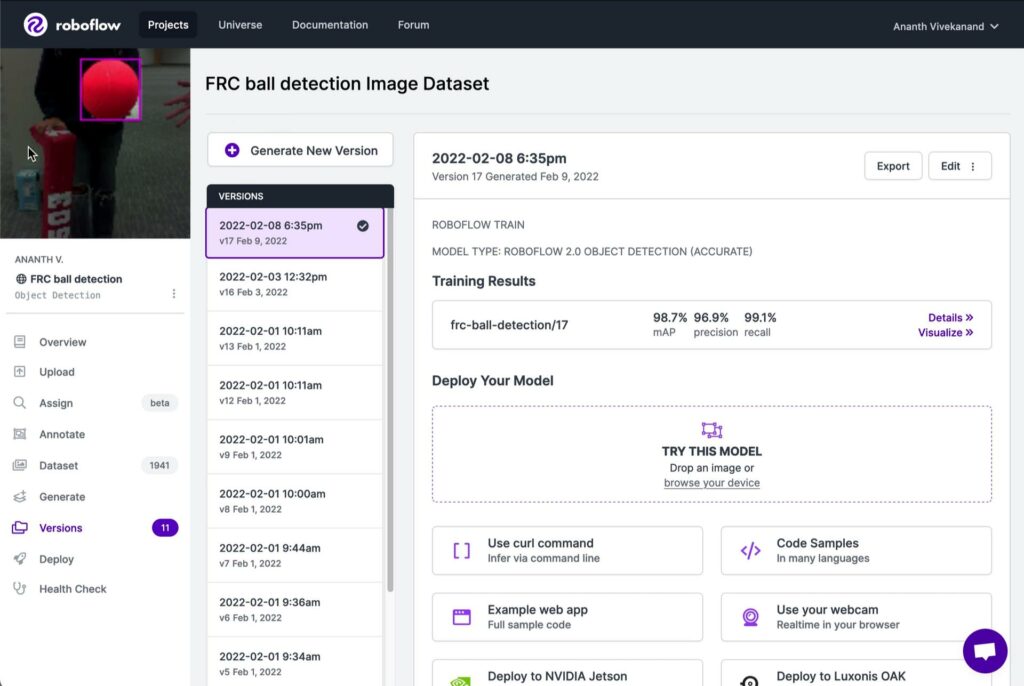

Roboflow provides a convenient platform to annotate training data, create datasets, and train powerful machine learning models.

After logging in to Roboflow, you will see an option to create a workspace. This is where we store and manage images.

Our model learns to detect objects of interest by training on labeled examples. To collect images, we can take pictures of the objects in real-world scenes and upload them to our Roboflow workspace. For the 2022 FRC competition, for example, this could be cargo placed at various locations around the field.

Another resource is Roboflow Universe, a growing collection of thousands of public datasets and models. For example, it hosts several other public FIRST datasets.

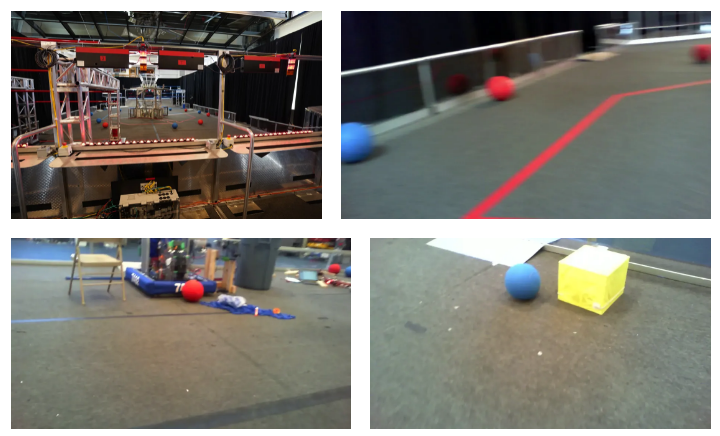

Below are some of the photos we shot and uploaded to Roboflow:

Extend robotics datasets with augmentation and preprocessing

Preprocessing and augmentation help computer vision models become more robust. Under the “Generate” tab you can choose the transformations to apply to your data.

Here are some augmentations you can use to aid model training:

- Flip: helps your model become insensitive to subject orientation.

- Grayscale: forces the model to learn to classify without color input.

- Noise: adding noise helps the model become more resilient to camera artifacts.

- Mosaic: combines multiple training images into one, which can improve accuracy on smaller objects.
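To make the list above concrete, here is a minimal NumPy sketch of what each augmentation does to the pixels. It is an illustration only, not Roboflow's implementation: the function names are made up for this example, images are assumed to be H x W x 3 `uint8` arrays, and a real pipeline would also have to transform the bounding-box labels.

```python
import numpy as np


def horizontal_flip(image: np.ndarray) -> np.ndarray:
    """Mirror an H x W x C image left to right."""
    return image[:, ::-1].copy()


def to_grayscale(image: np.ndarray) -> np.ndarray:
    """Average the colour channels, then repeat the result so the shape stays H x W x 3."""
    gray = image.mean(axis=2, keepdims=True)
    return np.repeat(gray, 3, axis=2).astype(image.dtype)


def add_gaussian_noise(image: np.ndarray, sigma: float, rng: np.random.Generator) -> np.ndarray:
    """Add zero-mean Gaussian noise and clip back to the valid 0..255 range."""
    noisy = image.astype(np.float32) + rng.normal(0.0, sigma, size=image.shape)
    return np.clip(noisy, 0, 255).astype(np.uint8)


def mosaic(images: list) -> np.ndarray:
    """Tile four equally sized images into one 2 x 2 mosaic."""
    top = np.concatenate(images[:2], axis=1)
    bottom = np.concatenate(images[2:4], axis=1)
    return np.concatenate([top, bottom], axis=0)
```

Applying several of these to each source image is how an augmented dataset ends up larger than the set of photos you originally uploaded.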

With Roboflow's augmentation features, you can generate, for free, a dataset up to 3 times the size of your training data.

After the dataset is generated, it appears under the “Versions” tab. There, you can train a robotics computer vision model with one click. We will deploy it to our Raspberry Pi and OAK camera. After you have trained your model, you can visualize its results.

Install dependencies

For the Raspberry Pi to interact with the OAK camera, it needs additional software, or dependencies. We can install them on the Raspberry Pi by running the following commands in the Terminal application:

sudo apt-get update

sudo apt-get upgrade

python3 -m pip install roboflowoak depthai opencv-python

The OAK camera also requires specific settings on the Raspberry Pi. To make sure they are in place, you also need to run the following commands in the terminal:

echo 'SUBSYSTEM=="usb", ATTRS{idVendor}=="03e7", MODE="0666"' | sudo tee /etc/udev/rules.d/80-movidius.rules

sudo udevadm control --reload-rules && sudo udevadm trigger
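If the camera is still not accessible afterwards, it can help to confirm that the rule was actually written. The following is a small sketch for that check, assuming the rules file path used in the command above. The helper names are hypothetical, and the check only inspects the text of the file; it does not talk to the camera.

```python
from pathlib import Path

# Path written by the `tee` command above.
RULES_PATH = Path("/etc/udev/rules.d/80-movidius.rules")


def rule_covers_vendor(rule_text: str, vendor_id: str = "03e7") -> bool:
    """Return True if a non-comment line matches the USB vendor ID and sets MODE="0666"."""
    wanted = f'ATTRS{{idVendor}}=="{vendor_id}"'
    for line in rule_text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and comments
        if wanted in line and 'MODE="0666"' in line:
            return True
    return False


def check_rules_file(path: Path = RULES_PATH) -> bool:
    """Read the rules file, if it exists, and check its contents."""
    return path.is_file() and rule_covers_vendor(path.read_text())
```

Calling `check_rules_file()` on the Raspberry Pi should return `True` once the two commands above have run successfully.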

Run your trained model on the OAK

We made a GitHub repository with handy code snippets inside. Clone it by running the following command:

git clone https://github.com/roboflow-ai/roboflow-api-snippets.git

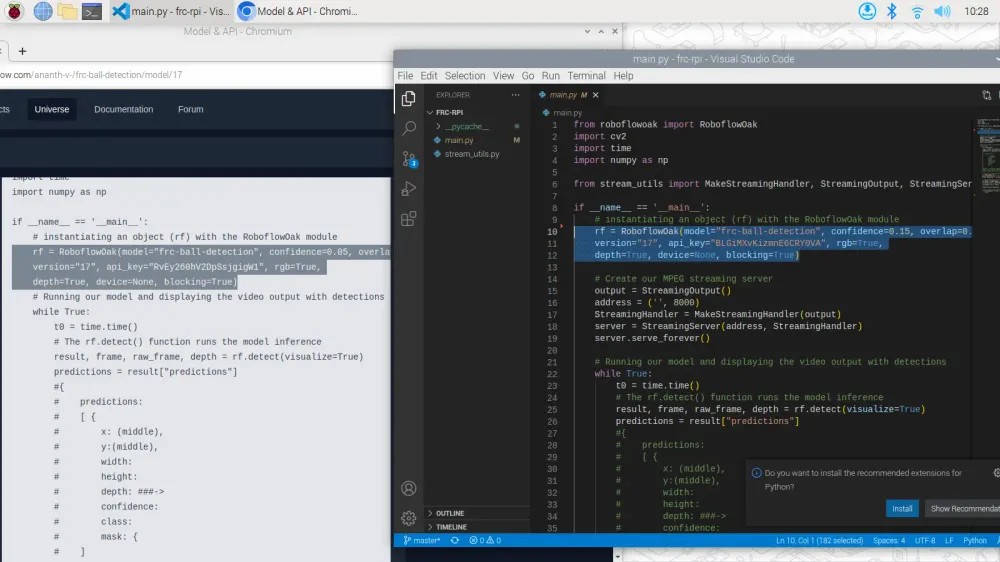

cd Python/LuxonisOak

Now open the file main.py and edit lines 10 to 12 to specify the model and version you want to run, as well as your API key. You can find these values on the model's test page by clicking the “Luxonis OAK” tab under “Use with”. We encourage you to try the other deployment options as well.
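For orientation, the part of the script that uses those three values looks roughly like the sketch below. This is not a copy of the repository's main.py: the placeholder strings, the helper names `detector_kwargs` and `run`, and the remaining constructor arguments are assumptions based on Roboflow's published `roboflowoak` example, so check them against the file you cloned.

```python
MODEL = "YOUR-MODEL-ID"          # placeholder: your Roboflow model ID
VERSION = "YOUR-MODEL-VERSION"   # placeholder: the dataset version you trained
API_KEY = "YOUR-API-KEY"         # placeholder: your private Roboflow API key


def detector_kwargs(model: str = MODEL, version: str = VERSION, api_key: str = API_KEY) -> dict:
    """Bundle the three user-specific values with assumed default options."""
    return {
        "model": model,
        "version": version,
        "api_key": api_key,
        "confidence": 0.05,  # assumed minimum confidence for a detection
        "overlap": 0.5,      # assumed overlap threshold for merging boxes
        "rgb": True,
        "depth": True,       # requires an OAK model with stereo depth
        "device": None,      # let the library pick the first camera it finds
        "blocking": True,
    }


def run(max_frames: int = 100) -> None:
    """Run inference on the OAK and print the predictions for each frame."""
    # Imported here so the settings helper works without the camera stack installed.
    from roboflowoak import RoboflowOak

    rf = RoboflowOak(**detector_kwargs())
    for _ in range(max_frames):
        result, frame, raw_frame, depth = rf.detect()
        print([p.json() for p in result["predictions"]])
```

Keeping the user-specific values in one place makes it harder to commit an API key by accident when you later extend the script.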

To test it, we can run:

python3 ./main.py

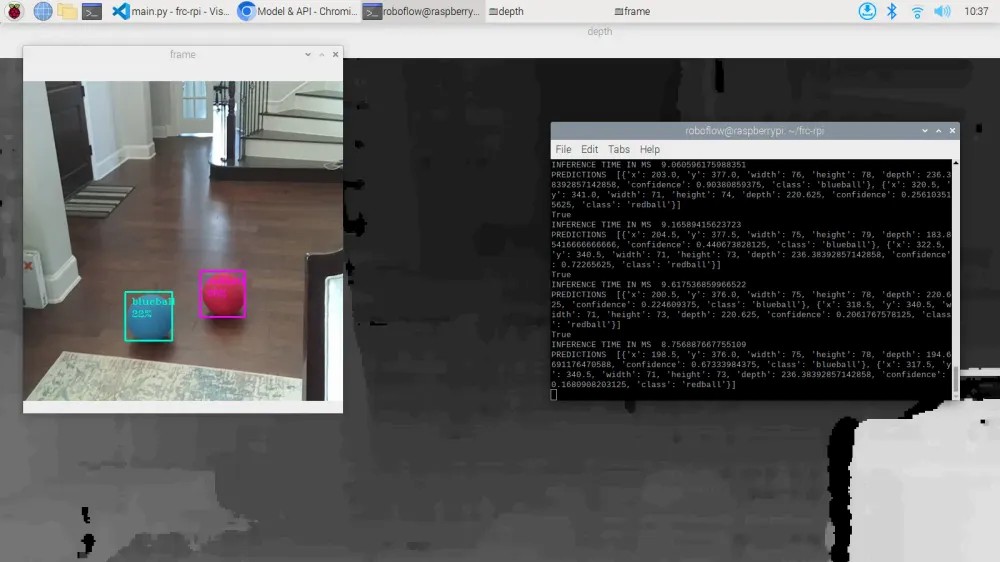

In the terminal output, we can see that each inference takes about 10 ms, which means the model is running at roughly 100 frames per second!
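The conversion from inference time to frame rate is a single division. Here it is as a short sketch; the function name is made up for this example, and the result is an upper bound because it ignores any time spent outside inference.

```python
def frames_per_second(inference_ms: float) -> float:
    """Upper bound on throughput when each frame takes `inference_ms` to process."""
    if inference_ms <= 0:
        raise ValueError("inference time must be positive")
    return 1000.0 / inference_ms  # 1000 ms in one second


print(frames_per_second(10.0))  # prints 100.0
```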

Building on this

By editing the main.py Python script, you can write functional code for your robot. For example, you can use a library such as networktables to send the coordinates of detected objects to other devices over the local network.

By using the Raspberry Pi as a vision coprocessor, you can easily integrate this system into your existing robot control system.
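As a rough sketch of the NetworkTables idea, the code below flattens a list of predictions and writes them to a table. Several things here are assumptions for illustration: the table name `vision`, the helper names, and the prediction keys (`x`, `y`, `confidence`, `class`), which are taken to match the dictionaries returned by `p.json()` in the Roboflow example. The NetworkTables calls are those of the `pynetworktables` package.

```python
def detections_to_arrays(predictions: list) -> dict:
    """Flatten prediction dicts into parallel lists, one entry per detected object."""
    return {
        "x": [float(p["x"]) for p in predictions],
        "y": [float(p["y"]) for p in predictions],
        "confidence": [float(p["confidence"]) for p in predictions],
        "class": [str(p["class"]) for p in predictions],
    }


def publish(table, predictions: list) -> None:
    """Write the flattened detections to a NetworkTables table (or any look-alike object)."""
    arrays = detections_to_arrays(predictions)
    table.putNumberArray("x", arrays["x"])
    table.putNumberArray("y", arrays["y"])
    table.putNumberArray("confidence", arrays["confidence"])
    table.putStringArray("class", arrays["class"])


def connect(server: str, table_name: str = "vision"):
    """Connect to the robot's NetworkTables server and return a table."""
    # Imported here so the helpers above can be tested without the package installed.
    from networktables import NetworkTables

    NetworkTables.initialize(server=server)
    return NetworkTables.getTable(table_name)
```

Inside the inference loop you would call `publish(table, [p.json() for p in predictions])` once per frame, with `table` obtained from `connect()` using your robot controller's address.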

▌References

https://docs.oakchina.cn/en/latest/

https://www.oakchina.cn/selection-guide/

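OAK China

| Official distributor and technical service provider for OpenCV AI Kit in China

| Tracking the latest developments in AI technology and products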

戳「+关注」获取最新资讯

边栏推荐

猜你喜欢

随机推荐

盘点四个入门级SSL证书

snapshot standby switch

Simple interaction between server and client

Primavera Unifier 高级公式使用分享

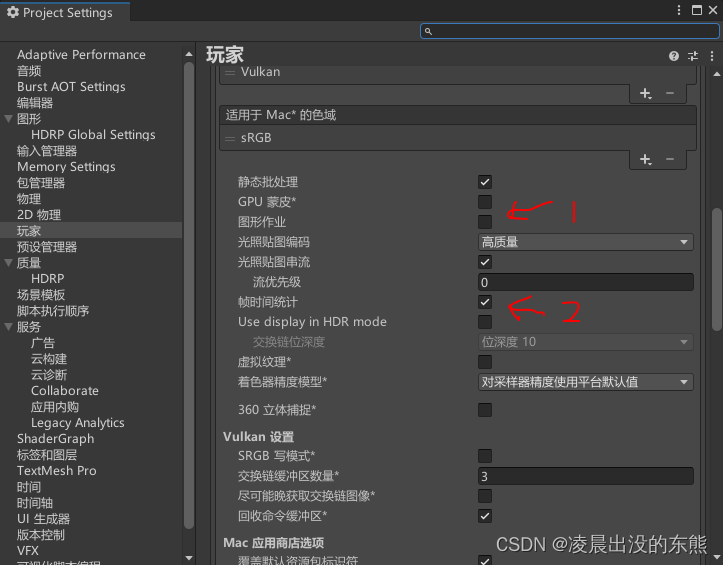

HDRP Custom Pass Shader 获取世界坐标和近裁剪平面坐标

Birth of the Go language

1.3版本自定义TrainOneStepCell报错

HDRP shader gets pixel depth value and normal information

unity shader 测试执行时间

collect awr

WooCommerce电子商务WordPress插件-赚美国人的钱

ES6: Expansion of Numerical Values

Contrastive Learning Series (3)-----SimCLR

5分钟快速为OpenHarmony提交PR(Web)

Typora和基本的Markdown语法

Primavera Unifier advanced formula usage sharing

DataGrip配置OceanBase

Go 语言的诞生

The no-code platform helps Zhongshan Hospital build an "intelligent management system" to realize smart medical care

QTableWidget 使用方法